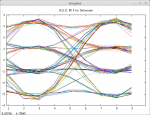

The "-a" option selects disc tap (discriminator audio) input. You should also add the "-g" (gain) option and tune the audio level, using the eye diagram (aka the "datascope" plot), until the signal levels match what the demodulator needs.

I also use the "-A" option with a high-performance homebrew "455 kHz to 24 kHz IF downconverter", which outputs a complex (I and Q) signal at a nominal IF of 24 kHz (the "-c" option tells OP25 the IF frequency). This handles "LSM/CQPSK" nicely -- something that's not possible with discriminator-style demodulation.
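For anyone wondering what "complex signal at a 24 kHz IF" means in practice, here is a minimal sketch of the general idea: multiply the I/Q samples by a complex exponential at minus the IF frequency, and the signal lands at 0 Hz, ready for demodulation. This is only an illustration, not OP25's actual code. The 96 kHz sample rate, the function name `mix_to_baseband`, and the test tone are all my own assumptions.

```python
import cmath
import math

# Assumed values for illustration only (not taken from OP25).
SAMPLE_RATE_HZ = 96_000   # hypothetical sound card rate carrying I and Q
IF_HZ = 24_000            # nominal IF, as would be given to "-c"


def mix_to_baseband(samples, if_hz, sample_rate_hz):
    """Shift a complex (I/Q) signal down by if_hz so the IF lands at 0 Hz."""
    # Phase step per sample of the local oscillator; negative sign shifts down.
    step = -2.0 * math.pi * if_hz / sample_rate_hz
    return [s * cmath.exp(1j * step * n) for n, s in enumerate(samples)]


# Test input: an unmodulated complex carrier sitting exactly at the IF.
tone = [
    cmath.exp(2j * math.pi * IF_HZ * n / SAMPLE_RATE_HZ)
    for n in range(64)
]

baseband = mix_to_baseband(tone, IF_HZ, SAMPLE_RATE_HZ)

# A carrier exactly at the IF becomes a constant (DC) value after mixing.
print(max(abs(b - 1) for b in baseband))
```

Because both I and Q are kept, amplitude and phase survive the shift, which is what a CQPSK demodulator needs; a discriminator output only carries the instantaneous frequency.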

Why would you want to use discriminator audio when you can have a frequency agile SDR that can be used to follow trunked radio systems?

The host receiver that I use (with "-H") is an old 1980s-era PRO-2006. As it's equipped with the OS-456, it's quite frequency-agile!

The reason for using the PRO-2006 instead of, say, the RTL-SDR is that the 2006 blows the doors off of the SDR in terms of dynamic range. I estimate there are approx. 15 or 20 stations I can hear perfectly on the 2006 (regardless of whether the disctap or the IF tap is used) whose presence *can't even be detected* on the SDR.

If the goal is to get performance that approaches what a "real vendor system radio" can do, the architecture of the receiver hardware used is very important. Not everything can be accomplished via software alone...

73

Max

p.s. edited to add: Also, inputting the signal at audio sampling rates requires several orders of magnitude less CPU horsepower than a typical SDR does -- kHz as opposed to MHz...